Fingerprint technology has become an integral part of our modern world, with its applications ranging from securing devices to solving crimes. But have you ever wondered when this revolutionary technology was first invented? In this article, we will take a journey into the past to explore the origins and evolution of fingerprint technology, and discover the key figures who shaped its development.

A Brief History of Biometrics & Fingerprint Identification

The concept of biometrics, or the use of physical characteristics for identification purposes, dates back thousands of years. Ancient civilizations, such as the Egyptians and Aztecs, used handprints and footprints as a means to identify individuals. However, it wasn’t until the late 19th century that the scientific study of fingerprints began to take shape.

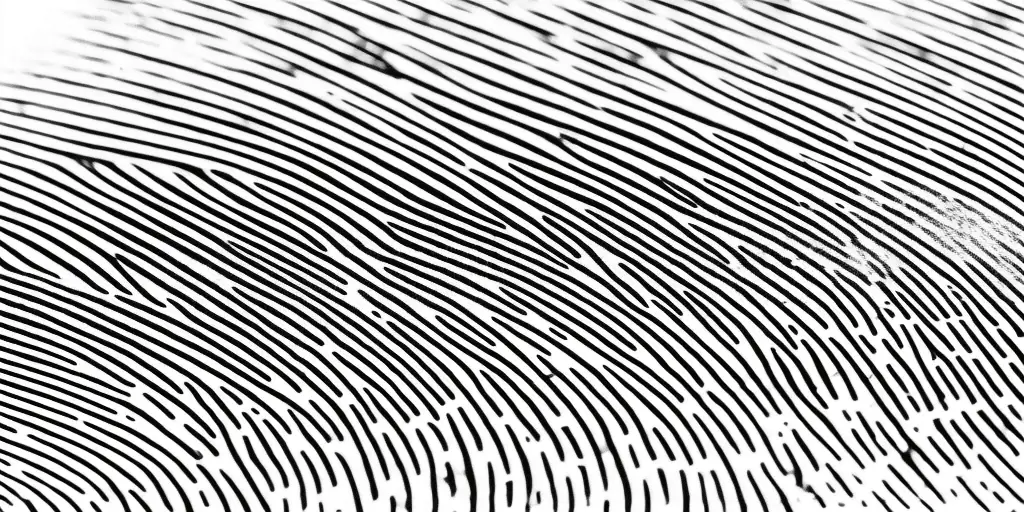

One of the pioneers in this field was Sir Francis Galton, a British scientist and cousin of Charles Darwin. Galton’s research on fingerprints laid the foundations for modern fingerprint identification. He discovered that each individual has unique ridge patterns on their fingertips that remain unchanged throughout their lifetime.

Evolution of Fingerprint Technology

The evolution of fingerprint technology can be traced back to the development of classification systems. In 1896 and 1897, Sir Edward Henry, then Inspector-General of Police in Bengal, India, developed the Henry Classification System with his assistants Azizul Haque and Hem Chandra Bose. This system categorized fingerprints based on their ridge patterns, allowing for easier identification and comparison.
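To make the idea of sorting prints by ridge pattern concrete, here is a minimal sketch of the "primary classification" as it is commonly described for the Henry system: each finger carries a fixed value, only fingers showing a whorl pattern are counted, and the result is a fraction between 1/1 and 32/32. The finger numbering (1 = right thumb through 10 = left little finger) and the function name `henry_primary` are illustrative assumptions, not details taken from this article.

```python
# Value assigned to each finger (1 = right thumb ... 10 = left little finger).
# Assumption: the commonly described scheme of paired values 16, 8, 4, 2, 1.
FINGER_VALUES = {1: 16, 2: 16, 3: 8, 4: 8, 5: 4, 6: 4, 7: 2, 8: 2, 9: 1, 10: 1}


def henry_primary(whorl_fingers):
    """Return the primary classification as (numerator, denominator).

    whorl_fingers: iterable of finger numbers (1-10) that show a whorl pattern.
    Even-numbered fingers feed the numerator, odd-numbered the denominator,
    and 1 is added to each so that neither is ever zero.
    """
    fingers = set(whorl_fingers)
    unknown = [f for f in fingers if f not in FINGER_VALUES]
    if unknown:
        raise ValueError(f"finger numbers must be 1-10, got {sorted(unknown)}")

    even_sum = sum(FINGER_VALUES[f] for f in fingers if f % 2 == 0)
    odd_sum = sum(FINGER_VALUES[f] for f in fingers if f % 2 == 1)
    return (1 + even_sum, 1 + odd_sum)


# No whorls at all falls into group 1/1; whorls on every finger into 32/32.
print(henry_primary([]))             # (1, 1)
print(henry_primary(range(1, 11)))   # (32, 32)
print(henry_primary([2, 3]))         # (17, 9)
```

Because numerator and denominator each range from 1 to 32, this step alone sorts a card into one of 1,024 groups, which is what made manual filing and lookup practical.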

Around the same time, other notable figures were also making significant contributions to the field of fingerprint identification. Juan Vucetich, an Argentinian police official, successfully used fingerprints to solve a murder case in 1892. Alphonse Bertillon, a French police officer, developed an identification system based on anthropometry, the study of body measurements, and later added fingerprints to his records to create a more comprehensive identification system.

Where it Began

The practical use of fingerprints as a means of identification began to gain recognition in the late 19th and early 20th centuries.

Sir Francis Galton is often credited with establishing the scientific basis for fingerprinting as a means of identification.

In 1901, Sir Edward Henry’s fingerprint classification system was officially adopted by Scotland Yard, which set up one of the world’s first organized fingerprint bureaus. This marked a turning point in the history of fingerprinting, as law enforcement agencies started to recognize its potential in solving crimes.

When Was Fingerprint Technology Invented?

While the use of fingerprints for identification purposes can be traced back centuries, the invention of automated fingerprint identification systems (AFIS) marked a significant milestone in fingerprint technology. Work on AFIS began in the 1960s, with research programs at the Federal Bureau of Investigation (FBI) in the United States and in the UK, and operational systems followed in the 1970s and 1980s.

Prior to the invention of AFIS, fingerprint identification was a laborious process that required manual matching of prints. AFIS automated this process by using computers to compare and match fingerprints against a large database, greatly speeding up the identification process.
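The one-to-many search described above can be illustrated with a toy sketch. It assumes each print has already been reduced to a list of minutiae given as `(x, y, angle_in_degrees)` tuples, and that all prints are already aligned to a common frame. Real AFIS matchers handle rotation, distortion, and image quality, and are far more sophisticated. The names `match_score` and `search`, the tolerances, and the sample data are all hypothetical.

```python
import math


def match_score(probe, candidate, dist_tol=10.0, angle_tol=20.0):
    """Fraction of minutiae that can be paired between two aligned templates."""
    unused = list(candidate)  # candidate minutiae not yet paired
    paired = 0
    for x, y, angle in probe:
        for i, (cx, cy, c_angle) in enumerate(unused):
            # Smallest difference between the two directions, in degrees.
            d_angle = abs(angle - c_angle) % 360
            d_angle = min(d_angle, 360 - d_angle)
            if math.hypot(x - cx, y - cy) <= dist_tol and d_angle <= angle_tol:
                paired += 1
                del unused[i]  # each candidate minutia is used at most once
                break
    return paired / max(len(probe), len(candidate), 1)


def search(probe, database, top_k=3):
    """Score the probe against every enrolled template and rank the results."""
    scored = [(match_score(probe, tmpl), pid) for pid, tmpl in database.items()]
    scored.sort(reverse=True)
    return [(pid, score) for score, pid in scored[:top_k]]


# Hypothetical enrolled templates and a probe print lifted from a scene.
database = {
    "A": [(10, 10, 90), (50, 60, 180), (100, 40, 270)],
    "B": [(200, 200, 0), (220, 240, 45)],
}
probe = [(12, 11, 95), (49, 62, 175), (300, 300, 0)]

for person_id, score in search(probe, database):
    print(person_id, round(score, 2))
```

In practice a system like this returns a ranked candidate list rather than a single answer, and a trained examiner reviews the top candidates before any identification is declared.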

The Growth of Biometrics

With the advent of AFIS, biometrics as a field grew rapidly. Governments and law enforcement agencies around the world started implementing fingerprint identification systems to streamline the process of criminal identification. Fingerprints became an invaluable tool in solving crimes, as they could provide strong evidence of an individual’s presence at a crime scene.

In addition to law enforcement applications, biometric technology found its way into various other industries. It was used for access control systems, where fingerprints replaced traditional keys and passwords. Companies started integrating biometric authentication into their devices and software, providing a more secure means of identification.

Then to Now

Over the years, fingerprint technology has continued to evolve and improve. New algorithms and hardware advancements have made fingerprint recognition more accurate and reliable than ever before. The development of palm print identification and the integration of fingerprint technology in mobile devices further expanded its applications.

One of the major advancements in recent years is the integration of fingerprint technology with face recognition. This combination of biometric modalities has created even more robust means of identification, enhancing security in various sectors, including border control and national security.

The Future of Fingerprint Technology

As technology continues to advance, the future of fingerprint technology and biometrics looks promising. The use of fingerprint technology is expected to expand beyond traditional identification systems. It has the potential to be integrated with wearable devices for continuous authentication and even used as a means of payment, eliminating the need for traditional methods like credit cards.

The development of more sophisticated algorithms and the use of artificial intelligence will further enhance the accuracy and speed of fingerprint recognition. In addition, advancements in sensor technology will allow for more advanced features, such as detecting pulse and sweat patterns, further enhancing the uniqueness of fingerprints as a means of identification.

Conclusion

The invention and subsequent evolution of fingerprint technology have revolutionized the way we identify individuals. From its humble beginnings with Sir William Herschel’s use of fingerprints as a means of identification in the 19th century to the development of automated fingerprint identification systems, fingerprints have become an indispensable tool in various industries and law enforcement agencies.

Looking ahead, we can expect fingerprint technology to continue to evolve and find new applications beyond identification systems. As advancements in biometrics reshape our digital world, fingerprints will remain a reliable and secure means of identification, ensuring the safety and security of our everyday lives.

FAQs

Q: When was fingerprint technology invented?

A: European scholars began studying fingerprints in the late 16th century, but plausible conclusions could only be established from the mid-17th century onwards. The practical use of fingerprints began in India in 1858, when Sir William Herschel, Chief Magistrate of the Hooghly District in Jungipoor, used fingerprints on native contracts. In 1882, Gilbert Thompson of the U.S. Geological Survey in New Mexico used his own thumbprint on a document to help prevent forgery, marking the first known use of fingerprints in the United States. Sir Francis Galton, a British anthropologist and cousin to Charles Darwin, published the first book on fingerprints in 1892, which included a detailed statistical model of fingerprint analysis and identification.

Q: How is fingerprint technology used today?

A: Fingerprint technology is used in various applications today. It is commonly used for biometric authentication, criminal identification, and personal identification. Fingerprint evidence can be used in investigations and court cases to link individuals to a crime scene.

Q: What is the value of fingerprint evidence in criminal investigations?

A: Fingerprint evidence is highly valuable in criminal investigations. It is considered one of the most reliable forms of evidence as each person’s fingerprint is unique. By comparing a set of fingerprints found at a crime scene with a known set of fingerprints, investigators can identify or eliminate potential suspects.

Q: How is fingerprint information recorded?

A: Fingerprint information has traditionally been recorded in ink on a fingerprint card, and today it is often captured electronically with a scanner. Fingerprint experts use specialized techniques to capture and preserve the minutiae, the ridge endings, bifurcations, and other fine details present in a person’s fingerprints. These records are then used for identification purposes and can be stored in a fingerprint repository for future reference.

Q: What are the main components of a fingerprinting system?

A: A fingerprinting system typically consists of a fingerprint scanner, software for image processing and analysis, and a database to store and compare fingerprints. The scanner captures the unique impressions of an individual’s fingerprints, and the software analyzes and matches these impressions with existing records in the database to facilitate positive identification.

Q: Who maintains the criminal record and fingerprint repository in the United States?

A: The national criminal record and fingerprint repository in the United States is maintained by the Criminal Justice Information Services (CJIS) Division of the Federal Bureau of Investigation (FBI). This division manages the national fingerprint database, which contains millions of criminal history records.

Q: How is fingerprint evidence used in the criminal justice system?

A: Fingerprint evidence plays a crucial role in the criminal justice system. It can be used to link a suspect to a specific crime, corroborate witness testimonies, and establish a person’s criminal history. Fingerprint experts analyze and compare fingerprint impressions found at crime scenes with known records to provide valuable evidence in court.

Q: Is the use of fingerprints limited to criminal investigations?

A: No, the use of fingerprints extends beyond criminal investigations. Fingerprint technology is also used for personal identification purposes, access control to secure areas, and authentication in various industries. Many organizations and institutions rely on fingerprint recognition systems to ensure secure and accurate identification.

Q: Are fingerprint impressions always visible on surfaces?

A: No, fingerprint impressions are not always visible to the naked eye. In some cases, they may be latent or invisible without proper enhancement techniques. Fingerprint experts use specialized powders, chemical treatments, or alternate light sources to make latent fingerprints visible and suitable for analysis.

Q: Are there any organizations involved in setting standards for fingerprint technology?

A: Yes. In the United States, the National Institute of Standards and Technology (NIST) is one of the main organizations involved in setting standards for fingerprint technology. It develops and promotes best practices, tools, and technologies to ensure accuracy and consistency in fingerprint analysis and identification.